Cambridge, Massachusetts, May 25, 2013

Dear Paul:

Back in the late 1980s, you helped shape the concept of an emerging market debt overhang. The financial crisis has laid bare the fact that the dividing line between emerging markets and advanced countries is not as crisp as once thought. Indeed, this is a recurring theme of our 2009 book, This Time is Different: Eight Centuries of Financial Folly. Today, the growth bind of advanced countries in the periphery of the eurozone has a great deal in common with that of emerging market economies of the 1980s.

We admire your past scholarly work, which influences us to this day. So it has been with deep disappointment that we have experienced your spectacularly uncivil behavior the past few weeks. You have attacked us in very personal terms, virtually non-stop, in your New York Times column and blog posts. Now you have doubled down in the New York Review of Books, adding the accusation we didn't share our data. Your characterization of our work and of our policy impact is selective and shallow. It is deeply misleading about where we stand on the issues. And we would respectfully submit, your logic and evidence on the policy substance is not nearly as compelling as you imply.

You particularly take aim at our 2010 paper on the long-term secular association between high debt and slow growth. That you disagree with our interpretation of the results is your prerogative. Your thoroughly ignoring the subsequent literature, however, including the International Monetary Fund's work as well as our own deeper and more complete 2012 paper with Vincent Reinhart, is troubling. Perhaps acknowledging the updated literature, not to mention decades of theoretical, empirical, and historical contributions on drawbacks to high debt, would inconveniently undermine your attempt to make us a scapegoat for austerity. You write "Indeed, Reinhart-Rogoff may have had more immediate influence on public debate than any previous paper in the history of economics."

Setting aside this wild hyperbole, you never seem to mention our other line of work that has surely been far more influential when it comes to responding to the financial crisis. Specifically, our 2009 book (released before our growth and debt work) showed that recoveries from deep systemic financial crises are long, slow and painful. This was not the common wisdom at all before us, as you yourself have acknowledged on more than one occasion. Over the course of the crisis, and certainly by 2010, policymakers around the world were using our research, alongside their assessments, to help justify sustained macroeconomic easing on both the monetary and fiscal policy fronts.

Your desire to blame our later 2010 paper for the stances of some politicians fails to recognize a basic reality: We were out there endorsing very different policies. Anyone with experience in these matters knows that politicians may float a citation to an academic paper if it suits their purposes. But there are limits to how much policy traction they can get with this device when the paper's authors are out offering very different policy conclusions. You can refer to the appendix to this letter for our views on policy through the financial crisis as they were stated publicly in real time. We were not silent.

Very senior former policy makers, observing the attacks of the past few weeks, have forcefully explained that real-time policies are very seldom driven to any significant extent by a single academic paper or result.

It is worth noting that in the past, polemicists have often pinned the austerity charge on the International Monetary Fund for its work with countries having temporary or permanent debt sustainability issues. Since its origins after World War II, IMF programs have almost always involved some combination of austerity, debt restructurings, and structural reform. When a country that has been running large deficits is suddenly no longer able to borrow new funds, some measure of adjustment is invariably required, and one of the IMF's usual roles has been to serve as a lightning rod. Even before the IMF existed, long periods of autarky and hardship accompanied debt crises.

Now let us turn to the substance. The events of the past few weeks do not change basic facts and fundamentals.

Some Fundamentals on Debt

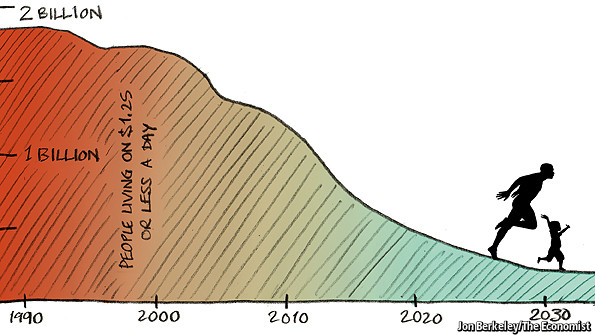

First, the advanced economies now have levels of debt that surpass most if not all historic episodes. It is public debt and private debt (which often becomes public as a crisis unfolds). Significant shares of these debts are held by foreigners in most cases, with the notable exception of Japan. In Europe, where the (public and private) external debt exposures loom largest, financial de-globalization is well underway. Debt financing has become an increasingly domestic business and a difficult one when the pool of domestic saving is limited.

As for the United States: our only short-lived high-debt episode involved WWII debts, which were held by domestic residents, not fickle international investors or central banks in China and elsewhere around the globe. This observation is not meant to suggest "a scare" in the offing, with bond vigilantes driving a concerted sell-off of Treasuries by the rest of the world and a dramatic spike in US interest rates. Carmen's work on financial repression suggests a different scenario. But many emerging markets have stepped into bubble-like territory and we have seen this movie before. We should not take for granted their prosperity that makes possible their continuing large-scale purchases of US debt. Reversals are possible. Sensible risk management means planning for these and other contingencies that might disturb today's low global interest rate environment.

Second, on debt and growth. The Herndon, Ash and Pollin paper, using a different methodology, reinforces our core result that high levels of debt are associated with lower growth. This fact has been hidden in the tabloid media and blogosphere discourse, but this point is made plain by even a cursory look at the full set of results reported in the very paper they critique. More importantly, the result was prominently featured in our 2012 Journal of Economic Perspectives paper with Vincent Reinhart on Debt Overhangs, which they do not cite. The main point of our 2012 paper is that while the difference in annual GDP growth between high and lower debt cases is about one percent a year, debt overhang episodes last on average 23 years. Thus, the cumulative effect on income levels over time is significant.
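As a back-of-the-envelope illustration of that compounding (a sketch only: the symbols below are introduced here for illustration, and a constant growth gap over the whole episode is an assumption, not a result taken from the paper), let $Y_H(T)$ and $Y_L(T)$ be income after $T$ years on the high-debt and lower-debt paths, starting from the same level, with annual growth rates $g_H = g_L - 0.01$:

$$
\frac{Y_H(T)}{Y_L(T)} = \left(\frac{1+g_H}{1+g_L}\right)^{T} \approx e^{-0.01\,T}, \qquad T = 23 \;\Rightarrow\; e^{-0.23} \approx 0.79 .
$$

Under these assumptions, income at the end of an average-length episode would be roughly a fifth lower than on the lower-debt path.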

Third, the debate of the last few weeks does not change the fact that debt levels above 90% (even if one entirely rejects this marker for gross central government debt as a common cross-country "threshold") are very rare altogether and even rarer in peacetime. From 1955 until right before the recent crisis, advanced economies spent less than 10% of those years at a debt/GDP ratio higher than 90%; only about two percent of the years are above 120% debt/GDP. If governments thought high debt was a riskless proposition, why did they avoid it so consistently?

Debt and Growth Causality

Your recent April 29, 2013 NY Times blog post, The Italian Miracle, is meant to highlight how in high-debt Italy, interest rates have come down since the European Central Bank's well-placed efforts to act more as a lender of last resort to periphery countries. No disagreement there. However, this positive development is meant to reinforce your strongly held view that high debt is not a problem (even for Italy) and that causality runs exclusively from slow growth to debt. You do not mention that in this miracle economy, GDP fell by more than 2 percent in 2012 and is expected to fall by a similar amount this year. Elsewhere you have stated that you are sure that Italy's long-term secular growth/debt problems, which date back to the 1990s, are purely a case of slow growth causing high debt. This claim is highly debatable.

Indeed, your repeatedly expressed view that slow growth causes high debt, but not vice versa, is hardly supported by the recent literature on the subject. Of course, as we have already noted, this work has been singularly ignored in the public discourse of the past few weeks. The best and worst that can be said is that the results are mixed. A number of studies looking at more comprehensive growth models have found significant effects of debt on growth. We made this point in the appendix to our New York Times piece. Of course, it is well known that the economic cycle impacts government finances and therefore debt (causation from growth to debt). Cyclically adjusted budgets have been around for decades, your shallow characterization of the growth-debt connection notwithstanding.

As for ways debt might affect growth, there is debt with drama and debt without drama.

Debt with drama. Do you really think that a country that is suddenly unable to borrow from international capital markets because its public and/or private debts that are a contingent public liability are deemed unsustainable will not suffer lower growth and higher unemployment as a consequence? With governments and banks shut out from international capital markets, credit to firms and households in periphery Europe remains paralyzed. This credit crunch has a crippling effect on growth and employment with or without austerity. Fiscal austerity reinforces the procyclicality of the external and domestic credit crunch. This pattern is not unique to this episode.

Policy response to debt with drama. On the policy response to this sad state of affairs, we stress that restoring the credit channel is essential for sustained growth, and this is why there is a need to write off senior bank debt in many countries. Furthermore, there is no reason why the ECB should buy only sovereign debt; purchases of senior bank debt along the lines of the US Federal Reserve's purchases of mortgage-backed securities would be instrumental in rekindling credit and working capital for firms. We don't see your attraction to fiscal largesse as a substitute. Periphery Europe cannot afford it and for Germany, which can afford it, fiscal expansion would be procyclical. Any overheating in Germany would exert pressure on the ECB to maintain a tighter monetary policy, backtracking on some of the progress made by Mario Draghi. A better use of Germany's balance sheet strength would be to agree on faster and bigger haircuts for the periphery, and to support significantly more expansionary monetary policy by the ECB.

Debt without drama. There are other cases, like the US today or Japan since the mid-1990s, where there is debt without drama. The plain fact that we know less about these episodes is a point we already made in our New York Times piece. We pointedly do not include the historical episodes of 19th century UK and Netherlands among these puzzling cases. Those imperial debts were importantly financed by massive resource transfers from the colonies. They had "good" high-debt centuries because their colonies did not. We offer a number of ideas in our 2012 paper for why debt overhang might matter even when there is no imminent collapse of borrowing capacity.

Bad shocks do happen. What is the foundation for your certainty that as peacetime debt hits new records in coming years, the United States will be able to engage in forceful countercyclical fiscal policy if hit by a large unexpected shock? Furthermore, do you really want to find out the answer to that question the hard way?

The United Kingdom, which does not issue the reserve currency, is more dependent on its financial sector and suffered a bigger banking bust, has not had the same shale gas revolution, and is more vulnerable to Europe, is clearly more exposed to the drama scenario than the US. And yet you regularly assert that the situations in the US and UK are the same and that both countries have the costless option of engaging in an open-ended fiscal expansion. Of course, this does not preclude high-return infrastructure investments, making use of the public balance sheet directly or indirectly through public-private partnerships.

Policy response to debt without drama. Let us be clear, we have addressed the role of somewhat higher inflation and financial repression in debt reduction in our research and in numerous pieces of commentary. As our appendix shows, we did not advocate austerity in the immediate wake of the crisis when recovery was frail. But the subprime crisis began in the summer of 2007, now six years ago. Waiting 10 to 15 more years to deal with a festering problem is an invitation for decay, if not necessarily an outright debt crisis. The end may not come with a bang but with a whimper.

Scholarship: Stick to the facts

The accusation in the New York Review of Books is a sloppy neglect on your part to check the facts before charging us with a serious academic ethical infraction. You had already implicitly endorsed this from your perch at the New York Times by posting a link to a program that treated the misstatement as fact.

Fortunately, the "Wayback Machine" crawls the Internet and periodically makes wholesale copies of web pages. The debt/GDP database was first archived in October 2010 from Carmen's University of Maryland webpage. The data migrated to ReinhartandRogoff.com in March 2011. There it sits with our other data, on inflation, crisis dates, and exchange rates. These data are regularly sought and found by those doing research who care to look. The greater disclosure of debt data from official institutions is testament to this. The IMF began to construct historical public debt data only after we had provided a roadmap in the list of our detailed references in a 2009 book (and before that in a 2008 working paper) that explained how we had unearthed the data.

Our interaction with scholars and practitioners working on real world questions in our field is ongoing, and our doors remain open. So to accuse us of not sharing our data is an unfounded attack on our academic and personal integrity.

Recap

Finally, we attach, as do many other mainstream economists, a somewhat higher weight on risks than you do, as debts of all measure -- including old age liabilities, public debt, private debt and external debt -- ascend into record territory. This is not a conclusion based on one or two papers as you sometimes seem to imply, but rather on a long-standing body of economic research and extensive historical experience about the risks of record high debt levels.

You often cite John Maynard Keynes. We read Keynes, all the way through. He wrote How to Pay for the War in 1940 precisely because he was not blasé about large deficits - even in support of a cause as noble as a war of survival. Debt is a slow-moving variable that cannot - and in general should not - be brought down too quickly. But interest rates can change much more quickly than fiscal policy and debt.

You might be right, and this time might be, after all, different. If so, we will admit that we were wrong. Whatever the outcome, we intend to be there to put the results in proper context for the community of scholars, policymakers, and civil society.

Respectfully yours,

Carmen M. Reinhart and Kenneth S. Rogoff

Harvard University.

Appendix I. Reinhart and Rogoff: Selected interviews, op-eds, and media on the policy response to crisis

"Two prominent economists who published an acclaimed study last year of 800 years of national financial crises, "This Time Is Different," see flaws on both sides of today's argument. The debt must be dealt with, they say, but not too fast."

Paul Krugman, New York Times, August 18, 2010 (Citing from McClatchy article) "Rogoff: We may need another stimulus bill just to decompress from the previous one, a smaller one to cushion the landing. Reinhart: I'm not one of those deficit hawks.... I'm not saying you run out and pull the plug and have an adjustment that could derail what fragile recovery we do have. Good for them."

Top Culprit in the Financial Crisis: Human Nature, Barrons, November 24, 2012, by Lawrence C. Strauss

Reinhart: "...the thrust in a deep financial crisis, when you throw in both monetary and fiscal stimulus, is to come up with something that helps raise the floor. That's why the decline wasn't 10% or 12%. However, one area where policy really has left a bit to be desired is that both in the U.S. and in Europe, we have embraced forbearance. Delaying debt write-downs and delaying marking to market is not particularly conducive to speeding up deleveraging and recovery."

Rogoff: "...if you didn't just raise taxes or cut taxes but actually fixed the tax system, that would be very important....And, lastly, other things, like infrastructure and education spending, are important. This isn't all about austerity versus no austerity. Countries that are successful in dealing with these crises, such as Sweden, sometimes take them as an opportunity to change. We haven't."

Reinhart Testimony before Senate Budget Committee, February 9, 2010.

"In light of the likelihood of continued weak consumption in the U.S. and Europe, rapid withdrawal of stimulus could easily tilt the economy back into recession. To be sure, this is not the time to exit. It is, however, the time to lay out a credible plan for a future exit."

5 Myths about the European debt crisis, by Carmen Reinhart and Vincent Reinhart, Washington Post, May 9, 2010

Myth #3: Fiscal austerity will solve Europe's debt difficulties.

"But fiscal austerity usually doesn't pay off quickly. A large and sudden contraction in government spending is almost sure to shrink economic activity as well. This means tax collections fall and unemployment and welfare benefits rise, undermining efforts to reduce the deficit. Even if new borrowing is reduced or eliminated, it takes time to whittle down a large debt, and international investors are notoriously impatient."

"One of the main goals of financial repression is to keep nominal interest rates lower than would otherwise prevail. This effect, other things being equal, reduces governments' interest expenses for a given stock of debt and contributes to deficit reduction. However, when financial repression produces negative real interest rates and reduces or liquidates existing debts, it is a transfer from creditors (savers) to borrowers and, in some cases, governments."

"The current strategy that calls for years of austerity and recession in the periphery countries is just not tenable."

The Euro's Pig-Headed Masters (Kenneth Rogoff, Project Syndicate, June 2011)

"Instead of restructuring the manifestly unsustainable debt burdens of Portugal, Ireland, and Greece (the PIGs), politicians and policymakers are pushing for ever-larger bailout packages with ever-less realistic austerity conditions."

The Economy and the Candidates, Wall Street Journal Report with Maria Bartiromo, October 21, 2012 (interview with Kenneth Rogoff)

Min 2:40 on Fiscal Cliff: "Hopefully we won't commit economic suicide by actually putting in all that tightening so quickly." "I like to see something like Simpson Bowles....If we did, we could have our cake and eat it too, we could have more revenue without hurting growth."

Kenneth Rogoff on Economy, European Debt Crisis, Bloomberg Surveillance, July 27, 2012, Interviewer Tom Keene: "You told me five years and change ago that we would need four trillion dollars of stimulus to get through this" min 7:55: "yes to great infrastructure projects, but not to just digging ditches"

The Bullets Yet to Be Fired, Financial Times, August 8, 2011 (by Kenneth Rogoff)

"In the case of Europe, this involves very large debt write downs in the smaller periphery countries, combined with a German guarantee of central government debt in the rest....In the case of the US, policymakers need to offer schemes to write down underwater mortgages....there is still the option of trying to achieve some modest deleveraging through moderate inflation of say 4 to 6 per cent for several years.....Last but not least, monetary and financial solutions must be buttressed by structural reforms...."

Interview with Charlie Rose, Business Week, December 2012, CR: Does this economy need further stimulus? KR: "Certainly, withdrawing it at too rapid a rate in such a fragile economy makes no sense....We need to have areas where we spend money, like infrastructure, education."

Inflation is Now the Lesser Evil, Kenneth Rogoff, Project Syndicate, December 2008, "It is time for the world's major central banks to acknowledge that a sudden burst of moderate inflation would be extremely helpful in unwinding today's epic debt morass."

Appendix II. Reinhart and Rogoff's support of Grand Bargain in Senator Tom Coburn's book "The Debt Bomb"

Failing to find evidence of extreme hawkish positions in interviews, op-eds and media appearances, some have claimed that we were much more hawkish in private. Any academic who has dealt with policymakers full well knows that if one's public and private positions are incongruous, it undermines one's impact.[1]

Senator Coburn's book gives his perspective and selected comments on one such private meeting, on April 5, 2011. It was an informal one hour breakfast meeting with forty Senators roughly evenly divided between Democrats and Republicans. The meeting was organized by the so-called "Gang of Six" centrist senators, three Democrats and three Republicans. The Gang of Six, of course, represented a unique bi-partisan effort to strike a long term budget deal in a very toxic and polarized environment. We presume the meeting was kept off the record so the senators could be as frank as possible; we did not care. We strongly supported the spirit of the Gang of Six and are proud of our role in helping them and the National Commission on Fiscal Responsibility.

The meeting began with Carmen Reinhart giving a fifteen minute presentation. The whole focus of the meeting was on how to approach a gradual move towards long-term fiscal sustainability.

The main ideas being discussed at the time were variants of the Simpson Bowles proposal, an ambitious attempt at a Grand Bargain, aiming to gradually reduce deficits over ten years, with a mix of tax increases, spending cuts and entitlement and tax reforms. In his book, Senator Coburn notes:

"Neither Reinhart nor Rogoff said we could fix our debt problem with just tax increases. Both emphasized the need for comprehensive tax reform and tax code simplification. Reinhart said the mortgage interest deduction discourages savings, while Rogoff told me later, 'The current code is jalopy.'" The Debt Bomb, by Senator Tom Coburn, p. 30 (2012).

Of particular importance to us, their proposal envisioned significant reform of the income tax system in a way that might potentially have created a more efficient and fairer system that could have increased revenue with fewer growth-compromising distortions. Many others on both sides of the fence, including President Obama and later 2012 Republican Presidential candidate Mitt Romney, endorsed such reforms. When Representative Ryan later cited our work in support of his counterplan, we did not endorse his plan, and continued to favor the Simpson-Bowles approach.

A couple of Senator Coburn's quotes from us at the meeting, taken without the full context of our introductory remarks, have been interpreted as saying we endorsed immediately closing the budget deficit. This was at odds with our position, notably our work on slow and often halting recoveries from financial crises, which we also emphasized. In fact, taking into account our opening remarks, it is our impression that the Senators full well understood that the urgency we were expressing referred to adopting a long-term Grand Bargain a la Simpson Bowles.